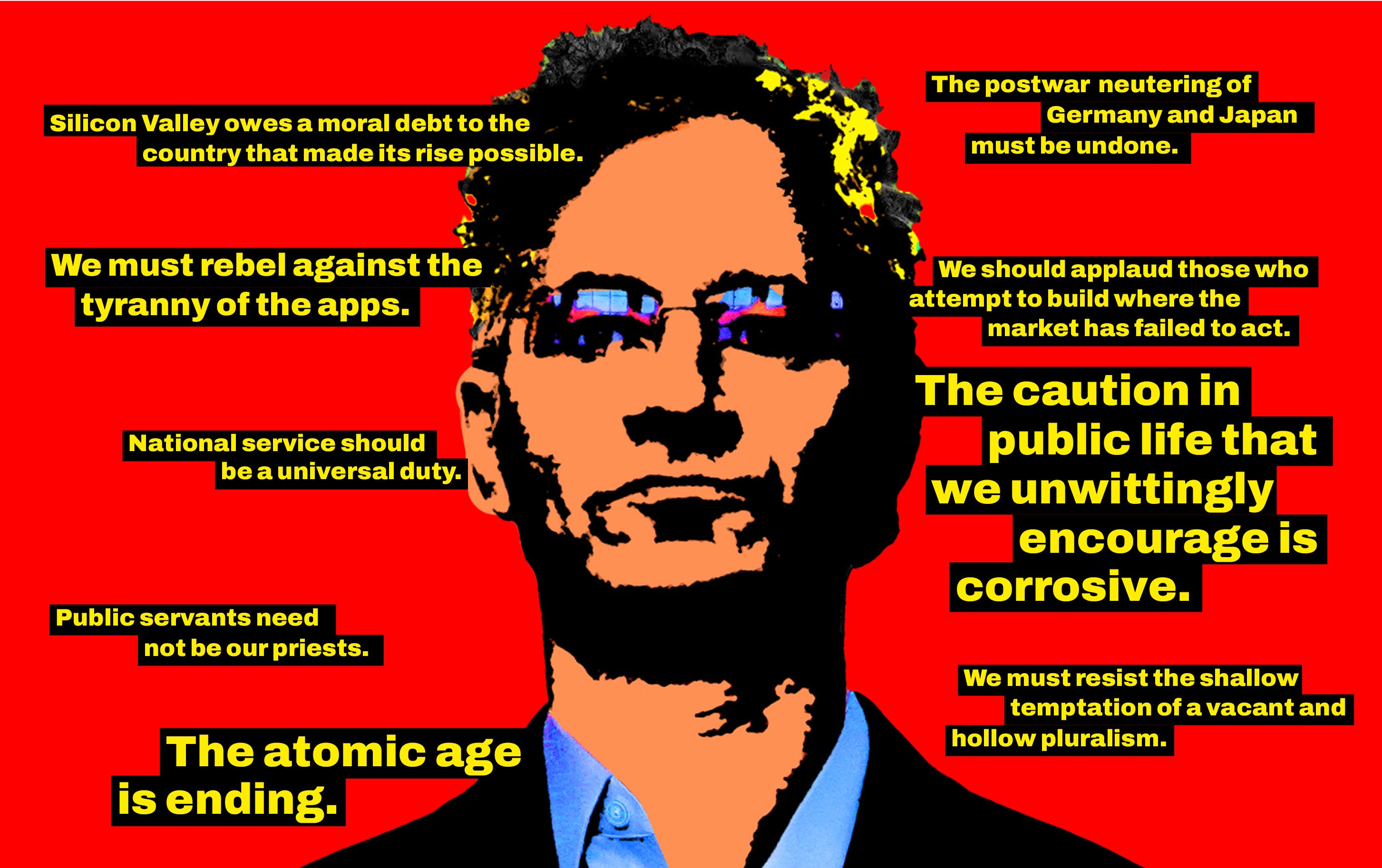

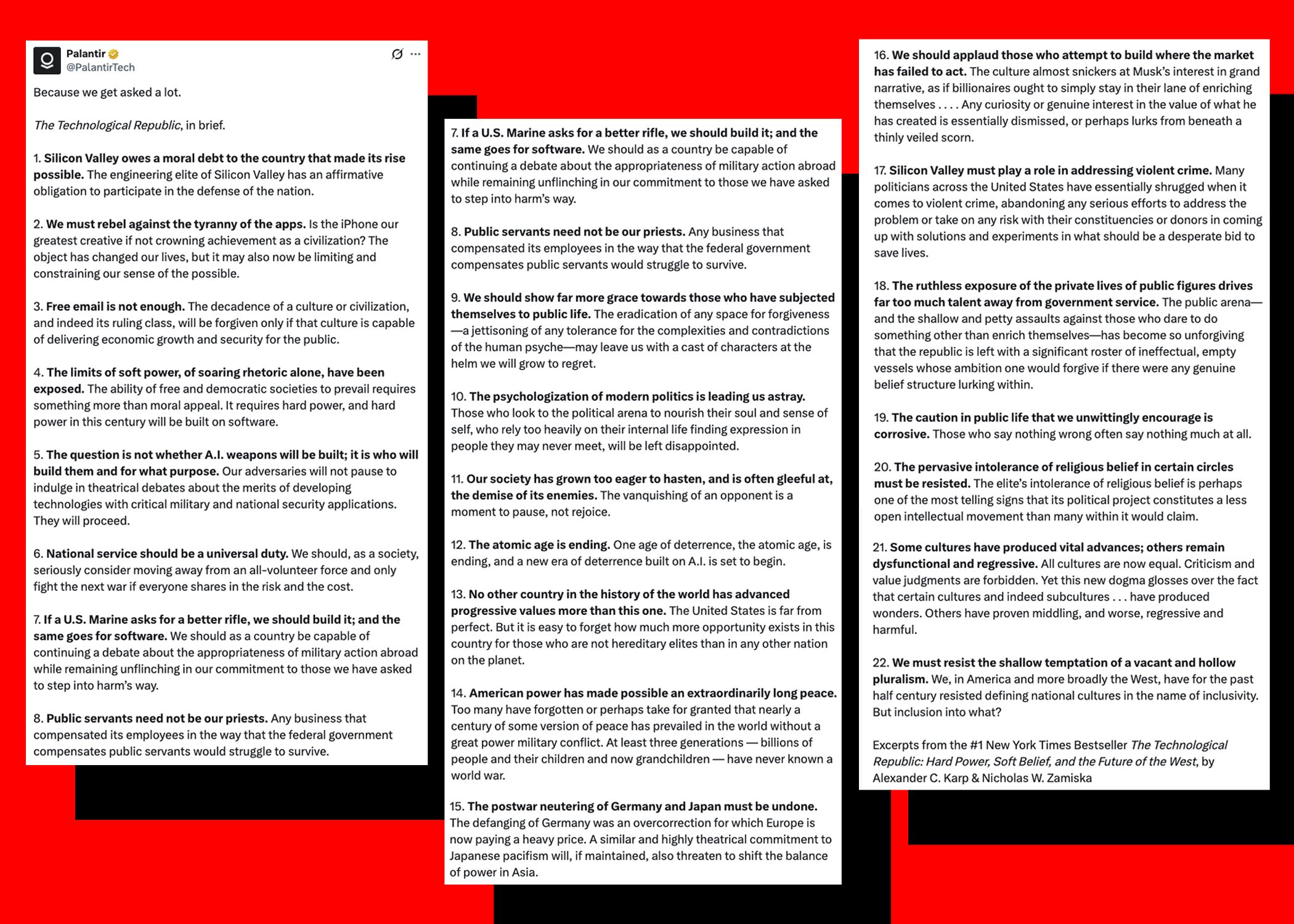

The recent 22-point post on X by Palantir Technologies outlining the philosophy of its co-founder and CEO Alex Karp – on everything from compulsory national service to a new age of deterrence built on AI – is quite an event. It’s one thing for a government or political party to articulate and compete over a political vision: that’s expected, even mandatory. It’s another for a private company, especially one deeply embedded in state security and surveillance, to do so. This is not just advertising by a leading global tech arms dealer. It’s a manifesto. And for any friend of democracy, reading it is like opening a food item that you suspected had gone off, without knowing it was quite that far gone.

Palantir, led by Karp and co-founded by Peter Thiel, is not a political thinktank. It is not an elected body. It’s not accountable to the public. It’s a contractor: a tech firm that builds powerful software and data infrastructure used by militaries, intelligence agencies and law enforcement around the world. When such a company begins to speak in sweeping ideological terms about the direction society should take, it raises questions, and rightly so.

But it’s the content, tone and subtext of the post – distilled from Karp’s book The Technological Republic: Hard Power, Soft Belief and the Future of the West – that make it especially unsettling, and that is why we must pay attention. Rather than sticking to product announcements, it advances a worldview. A political ideology, and a very particular one: one that is openly hostile to liberal democracy and rejects pluralism, inclusion and empathy, instead embracing “hard power” (read: violence) and permanent warfare (ideal if you’re an arms dealer), calling for sacrifices for the nation and drafting people into military service, cracking down on crime, welcoming religion in the realm of power, dismissing the equality of cultures in favour of western supremacy and elitism, deeming interiority and reflection unnecessary when it comes to the masses (that’s reserved for the elite), promoting collaboration between Big Tech and the state, endorsing the suppression of dissent by means of a surveillance system that always knows how to find you, demanding the rearmament of Germany and Japan, and arguing for technological dominance over the enemies of the state.

This is not the usual language of tech, not even Big Tech. If this sounds familiar, it should. The glorification of strength, warfare and the nation, the subordination of citizens to the state, and the entanglement of corporate and state power ring a very specific bell: a fascist one. Silicon Valley has been drifting in that direction for some time now – think of Elon Musk, Peter Thiel, and others. But now things are getting more consequential.

In my book Why AI Undermines Democracy and in my paper on technofascism, I warned against a political trajectory in which digital technologies do not merely support governance but begin to reshape it in authoritarian directions. The danger is not only overt repression and warmongering but also a subtler transformation: the normalisation of surveillance, the delegation of judgment to opaque systems, and the quiet concentration of power in the actors who design and control these infrastructures.

Palantir does not only exist to make money. With its ties to state power, and in particular the Trump regime, the goal is power accumulation. Not so much for the state in question, but for the tech executives themselves. Technological elites begin to function as quasi-political authorities without democratic legitimacy. The engineer, the data scientist, but especially the billionaire, is recast as arbiter of social order. Who needs a parliament?

There is something distinctly authoritarian in the subtext of Palantir’s post. The emphasis on total visibility, on integrating disparate data streams into a single operational picture, on enabling faster and more decisive action. From a business and engineering perspective, all this can be framed as a call for efficiency. But efficiency, what Karp’s beloved Frankfurt School – he studied under Jürgen Habermas – called instrumental rationality, can become a political value that overrides others: deliberation, pluralism, dissent. In such a system, the friction of democratic processes is not a feature but a bug to be engineered away. This belief does not arrive wearing the obvious symbols of 20th-century authoritarianism; it comes dressed as security, innovation, optimisation and progress.

Palantir’s manifesto frames its tech as a response to a lack of order and security. It rests on the belief that advanced technology can and should be used to impose order on a complex, unruly world, guided by those who build and understand these systems.

The tech imperium envisaged here is put forward as an answer to a particular framing of the problem: a framing introduced by Hobbes in the 17th century and further developed by the German political theorist Carl Schmitt – who provided legal and philosophical cover for the Nazi regime. Hobbes held the pessimistic view that without authoritarian order, humans cannot manage to live together. He justified absolute state authority as the force that could restore order. A Leviathan to rule over people. Palantir’s answer to chaos at the global level is similar. The message to its clients is: make sure you’re the winner, dominate, and order is restored. Forget multilateralism; become the strongest and impose your order on all others.

Tech is the ideal tool for that: you don’t need to talk to people, try to convince them, argue with them. Habermas is passé; Schmitt is back. You just need to make sure you’re the strongest. The aim is to make “software that dominates”, as Palantir puts it on its X account profile. In other words, it aims to build the new Leviathan: the Hobbesian monster that guarantees security, but that comes at the price of freedom and democracy. Karp and Thiel are prepared to pay that price; or rather, they want you to pay it.

The most troubling part is that this vision is not hypothetical. Palantir and its political allies have already partly implemented it. Predictive policing tools shape how law enforcement allocates resources. Immigration systems rely on AI to track and categorise individuals. Military operations increasingly depend on real-time data fusion platforms, and AI is used to select targets for air strikes. Palantir’s software is a central part of this ecosystem. It’s used by the US government and Israel, but also by law enforcement in the EU and UK, and in Britain’s NHS. When the company describes a world organised around these capabilities, it is not imagining the future: it is describing the present, just extended and intensified. The contracts are signed. People have been detained. Bombs have fallen.

This is a gradual, infrastructural shift: not a sudden break into authoritarianism, but a slow recalibration of what feels normal via the entanglement of tech with power. The more these systems are embedded, the more their underlying assumptions – about control, visibility, and power – fade into the background. The problem is structural. Once the violence and technocracy are normalised, the way back to democracy narrows.

But this is not inevitable. We can and must defend democracy. In a healthy democracy, the direction of society is contested in public, through institutions designed – however imperfectly – to reflect the will of the people. Private tech companies have every right to participate in that conversation. But when their participation takes the form of promoting a model that concentrates power in the very systems they control, scepticism and resistance are not only warranted but necessary. Palantir’s post offers us a glimpse of the technofascist trajectory: not as a distant possibility, but as a world already under construction.

Perhaps that’s why it all sounds so confident. Karp is a happy man.

Mark Coeckelbergh is professor of philosophy at the University of Vienna. His new book is called Artificial Religion: On AI, Myth and Power (MIT Press). This is an edited version of a post from his Medium blog.